Because CSDN limits the length of a single post, this article continues from the previous part: 【深度学习】diffusion原理解析

3.2. Solving the objective function

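In the last term of the objective, $q(x_T|x_0)$ approximately follows a standard normal distribution, as mentioned earlier, and $P(x_T)$ is assumed to be standard normal. Both can be computed directly, so this term contains no learnable parameters.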
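As a rough numerical illustration of why this term can be ignored, the sketch below computes $\bar\alpha_T$ and the per-dimension KL between $q(x_T|x_0)$ and a standard normal. The schedule (T = 1000, $\beta_t$ linear from 1e-4 to 0.02) and the value of `x0` are assumptions chosen for the example, not something fixed by this post.

```python
import math

def alpha_bar(betas):
    """Running product of alpha_t = 1 - beta_t, one entry per step."""
    out, prod = [], 1.0
    for b in betas:
        prod *= 1.0 - b
        out.append(prod)
    return out

def kl_to_standard_normal(mean, var):
    """KL( N(mean, var) || N(0, 1) ) for a single scalar dimension."""
    return 0.5 * (var + mean * mean - 1.0 - math.log(var))

# Assumed schedule (illustrative only): T = 1000, beta linear in [1e-4, 0.02].
T = 1000
betas = [1e-4 + (0.02 - 1e-4) * i / (T - 1) for i in range(T)]
abar_T = alpha_bar(betas)[-1]

x0 = 1.0  # an arbitrary data value, assumed for the example
# q(x_T | x_0) = N(sqrt(abar_T) * x0, 1 - abar_T)
kl_T = kl_to_standard_normal(math.sqrt(abar_T) * x0, 1.0 - abar_T)
print(abar_T, kl_T)
```

Under these assumptions $\bar\alpha_T$ is very close to zero, so $q(x_T|x_0)$ is nearly $N(0, I)$ and the KL term is negligible.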

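What actually needs to be optimized are the first and second terms. The first term is the reconstruction loss; the second is a KL divergence, in which $P(x_{t-1}|x_t)$ has to be approximated by a neural network.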

The paper notes that $q(x_{t-1}|x_t)$ is (approximately, for small $\beta_t$) a normal distribution, but it cannot be computed, so $P(x_{t-1}|x_t)$ is used to approximate it.

$q(x_{t-1}|x_t,x_0)$, on the other hand, is a normal distribution (a proof is given elsewhere), and it can be computed in closed form.

Completing the square on $q(x_{t-1}|x_t,x_0)$ to read off its mean and variance is tedious, so we work backwards instead.

For a multivariate Gaussian $P(x)$ we have

$$
\begin{aligned}
P(x)=&\frac{1}{(2\pi)^{\frac{p}{2}}|\Sigma|^{\frac{1}{2}}}\exp\left\{-\frac{1}{2}(x-\mu)^T\Sigma^{-1}(x-\mu)\right\}\\
=&\frac{1}{(2\pi)^{\frac{p}{2}}|\Sigma|^{\frac{1}{2}}}\exp\left\{-\frac{1}{2}(x^T\Sigma^{-1}x-\mu^T\Sigma^{-1}x-x^T\Sigma^{-1}\mu+\mu^T\Sigma^{-1}\mu)\right\}\\
=&\frac{1}{(2\pi)^{\frac{p}{2}}|\Sigma|^{\frac{1}{2}}}\exp\left\{-\frac{1}{2}(x^T\Sigma^{-1}x-2\mu^T\Sigma^{-1}x+\mu^T\Sigma^{-1}\mu)\right\}
\end{aligned}\tag{11}
$$

Only two terms in the exponent depend on the random variable $x$: $x^T\Sigma^{-1}x$ and $2\mu^T\Sigma^{-1}x$. The first contains $x$ twice and is the quadratic term; the second contains $x$ once and is the linear term.

In the same way, for $q(x_{t-1}|x_t,x_0)$ we only need to identify the linear and quadratic terms in $x_{t-1}$ to obtain its mean and covariance.
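To spell out the matching rule this relies on (the symbols $A$ and $b$ are introduced here only for illustration): if the exponent of a density in $x$ can be written with a symmetric positive definite matrix $A$ and a vector $b$ as

$$
-\frac{1}{2}\left(x^TAx-2b^Tx\right)+C,
$$

then comparing with the quadratic term $x^T\Sigma^{-1}x$ and the linear term $2\mu^T\Sigma^{-1}x$ of a Gaussian gives

$$
\Sigma=A^{-1},\qquad \mu=A^{-1}b=\Sigma b.
$$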

$$
\begin{aligned}
q(x_{t-1}|x_t,x_0)=&\frac{q(x_{t-1},x_{t}|x_0)}{q(x_t|x_0)}\\
=&\frac{q(x_t|x_{t-1},x_0)q(x_{t-1}|x_0)}{q(x_t|x_0)}\\
=&\frac{q(x_t|x_{t-1})q(x_{t-1}|x_0)}{q(x_t|x_0)}\\
=&\frac{N(\sqrt{\alpha_t}x_{t-1},(1-\alpha_t)I)N(\sqrt{\bar\alpha_{t-1}}x_{0},(1-\bar\alpha_{t-1})I)}{N(\sqrt{\bar\alpha_t}x_{0},(1-\bar\alpha_t)I)}\\
\propto&\exp-\left\{\frac{(x_t-\sqrt{\alpha_t}x_{t-1})^T(x_t-\sqrt{\alpha_t}x_{t-1})}{2(1-\alpha_t)}+\frac{(x_{t-1}-\sqrt{\bar\alpha_{t-1}}x_{0})^T(x_{t-1}-\sqrt{\bar\alpha_{t-1}}x_{0})}{2(1-\bar\alpha_{t-1})}-\frac{(x_{t}-\sqrt{\bar\alpha_{t}}x_{0})^T(x_{t}-\sqrt{\bar\alpha_{t}}x_{0})}{2(1-\bar\alpha_{t})}\right\}\\
=&\exp-\left\{\frac{x_t^Tx_t-2\sqrt{\alpha_t}x_{t}^Tx_{t-1}+\alpha_tx_{t-1}^Tx_{t-1}}{2(1-\alpha_t)}+\frac{x_{t-1}^Tx_{t-1}-2\sqrt{\bar\alpha_{t-1}}x_0^Tx_{t-1}+\bar\alpha_{t-1}x_0^Tx_0}{2(1-\bar\alpha_{t-1})}-\frac{(x_{t}-\sqrt{\bar\alpha_{t}}x_{0})^T(x_{t}-\sqrt{\bar\alpha_{t}}x_{0})}{2(1-\bar\alpha_{t})}\right\}\\
=&\exp\left\{-\frac{1}{2}\left(x_{t-1}^T\frac{1-\bar\alpha_t}{\beta_t(1-\bar\alpha_{t-1})}x_{t-1}-2\frac{\sqrt{\alpha_t}(1-\bar\alpha_{t-1})x_t^T+\sqrt{\bar\alpha_{t-1}}(1-\alpha_t)x_0^T}{1-\bar\alpha_t}\frac{1-\bar\alpha_t}{\beta_t(1-\bar\alpha_{t-1})}x_{t-1}\right)+C\right\}
\end{aligned}
$$

Here $\beta_t=1-\alpha_t$, and $C$ collects all terms that do not depend on $x_{t-1}$. Comparing with Eq. (11) gives

$$
q(x_{t-1}|x_t,x_0)\sim N\left(x_{t-1}\,\Big|\,\frac{\sqrt{\alpha_t}(1-\bar\alpha_{t-1})x_t+\sqrt{\bar\alpha_{t-1}}(1-\alpha_t)x_0}{1-\bar\alpha_t},\ \frac{1-\bar\alpha_{t-1}}{1-\bar\alpha_t}\beta_tI\right)
$$

Using $1-\alpha_t=\beta_t$ and splitting the mean into two fractions, this becomes

$$
q(x_{t-1}|x_t,x_0)\sim N\left(x_{t-1}\,\Big|\,\frac{\sqrt{\alpha_t}(1-\bar\alpha_{t-1})x_t}{1-\bar\alpha_t}+\frac{\sqrt{\bar\alpha_{t-1}}\beta_t x_0}{1-\bar\alpha_t},\ \frac{1-\bar\alpha_{t-1}}{1-\bar\alpha_t}\beta_tI\right)
$$
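The closed form above can be sanity-checked numerically in the scalar case. The sketch below compares it with the textbook rule for multiplying two Gaussians in $x_{t-1}$ (precisions add). The function names and the sample values are made up for the illustration.

```python
import math

def posterior_closed_form(x_t, x_0, alpha_t, abar_prev):
    """Mean and variance of q(x_{t-1} | x_t, x_0) from the formula above (scalar case)."""
    beta_t = 1.0 - alpha_t
    abar_t = alpha_t * abar_prev  # abar_t = alpha_t * abar_{t-1}
    mean = (math.sqrt(alpha_t) * (1.0 - abar_prev) * x_t
            + math.sqrt(abar_prev) * beta_t * x_0) / (1.0 - abar_t)
    var = (1.0 - abar_prev) / (1.0 - abar_t) * beta_t
    return mean, var

def posterior_by_precisions(x_t, x_0, alpha_t, abar_prev):
    """Same posterior, obtained by multiplying two Gaussians in x_{t-1}."""
    beta_t = 1.0 - alpha_t
    # q(x_{t-1} | x_0): mean sqrt(abar_prev) * x_0, variance 1 - abar_prev
    prec_prior = 1.0 / (1.0 - abar_prev)
    # q(x_t | x_{t-1}) viewed as a function of x_{t-1}: precision alpha_t / beta_t
    prec_lik = alpha_t / beta_t
    var = 1.0 / (prec_prior + prec_lik)
    mean = var * (prec_prior * math.sqrt(abar_prev) * x_0
                  + math.sqrt(alpha_t) * x_t / beta_t)
    return mean, var

# Arbitrary example values (assumptions for the check).
m1, v1 = posterior_closed_form(0.3, -1.2, 0.98, 0.5)
m2, v2 = posterior_by_precisions(0.3, -1.2, 0.98, 0.5)
print(m1, m2, v1, v2)
```

Both routes should agree up to floating-point error, which confirms the linear and quadratic coefficients read off from Eq. (11).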

With this in hand, we can compute $KL(q(x_{t-1}|x_t,x_0)||P(x_{t-1}|x_t))$.

Write $q(x_{t-1}|x_{t},x_0)\sim N(x_{t-1}|\mu_\phi^{t-1},\Sigma_\phi^{t-1})$. For brevity I drop the time index $t-1$ and write $q(x_{t-1}|x_{t},x_0)\sim N(x_{t-1}|\mu_\phi,\Sigma_\phi)$.

Note that the covariance of $q(x_{t-1}|x_t,x_0)$ does not depend on $x_0$ or $x_t$; it is a fixed value.

Accordingly, let $P(x_{t-1}|x_t)\sim N(x_{t-1}|\mu_{\theta}^{t-1},\Sigma_{\theta}^{t-1})$. Again dropping the time index, this is written $P(x_{t-1}|x_t)\sim N(x_{t-1}|\mu_{\theta},\Sigma_{\theta})$.

Here $\Sigma_\theta$ is simply set equal to the covariance of $q(x_{t-1}|x_t,x_0)$, that is, $\Sigma_\phi=\Sigma_\theta$.

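The KL divergence between two normal distributions $N(\mu_1,\Sigma_1)$ and $N(\mu_2,\Sigma_2)$ is

$$
KL\left(N(\mu_1,\Sigma_1)\,||\,N(\mu_2,\Sigma_2)\right)=\frac{1}{2}\left[(\mu_1-\mu_2)^T\Sigma_2^{-1}(\mu_1-\mu_2)-\log\det(\Sigma_2^{-1}\Sigma_1)+Tr(\Sigma_2^{-1}\Sigma_1)-n\right]
$$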

where $n$ is the dimension of the random variable $x$.

For the derivation, see reference ③.

Substituting directly gives:

$$
\begin{aligned}
KL(q(x_{t-1}|x_t,x_0)||P(x_{t-1}|x_t))=&\frac{1}{2}\left[(\mu_\phi-\mu_\theta)^T\Sigma_\theta^{-1}(\mu_\phi-\mu_\theta)-\log \det(\Sigma_\theta^{-1}\Sigma_\phi)+Tr(\Sigma_\theta^{-1}\Sigma_\phi)-n\right]\\
=&\frac{1}{2}\left[(\mu_\phi-\mu_\theta)^T\Sigma_\theta^{-1}(\mu_\phi-\mu_\theta)-\log1+n-n\right]\\
=&\frac{1}{2}\left[(\mu_\phi-\mu_\theta)^T\Sigma_\theta^{-1}(\mu_\phi-\mu_\theta)\right]\\
=&\frac{1}{2\sigma^2_t}\left[||\mu_\phi-\mu_\theta(x_t,t)||^2\right]
\end{aligned}\tag{12}
$$
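The reduction in Eq. (12) can be checked numerically. The sketch below implements the general Gaussian KL for diagonal covariances and compares it with the squared-distance form when both distributions share the variance $\sigma^2$. The vectors and the value of `sigma2` are arbitrary assumptions for the check.

```python
import math

def kl_diag_gauss(mu1, var1, mu2, var2):
    """KL( N(mu1, diag(var1)) || N(mu2, diag(var2)) ); all arguments are lists of floats."""
    total = 0.0
    for m1, v1, m2, v2 in zip(mu1, var1, mu2, var2):
        # Mahalanobis term - log det term + trace term - 1 (one per dimension)
        total += (m1 - m2) ** 2 / v2 - math.log(v1 / v2) + v1 / v2 - 1.0
    return 0.5 * total

# Arbitrary example values (assumptions for the check).
mu_q = [0.2, -0.5, 1.0]
mu_p = [0.0, 0.1, 0.7]
sigma2 = 0.04
var = [sigma2] * 3  # same covariance sigma2 * I on both sides

kl_general = kl_diag_gauss(mu_q, var, mu_p, var)
kl_reduced = sum((a - b) ** 2 for a, b in zip(mu_q, mu_p)) / (2.0 * sigma2)
print(kl_general, kl_reduced)
```

With equal covariances the log-determinant and trace terms cancel, so only the scaled squared distance between the means remains.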

Here $\sigma_t^2$ denotes the variance in $\Sigma_\theta=\sigma_t^2I$. Since it is given rather than learned, it is written this way for simplicity.

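The paper also compares two choices for it: taking $\sigma_t^2$ to be the posterior variance $\tilde\beta_t=\frac{1-\bar\alpha_{t-1}}{1-\bar\alpha_t}\beta_t$ (that is, $\Sigma_\theta$ as above), or simply $\sigma_t^2=\beta_t$. Both give similar experimental results.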

This yields the final loss function for the KL terms.

The paper also rewrites this loss in other forms. Recall from above that

$$
\mu_\phi=\frac{\sqrt{\alpha_t}(1-\bar\alpha_{t-1})x_t}{1-\bar\alpha_t}+\frac{\sqrt{\bar\alpha_{t-1}}\beta_t x_0}{1-\bar\alpha_t}\tag{13}
$$

For $P(x_{t-1}|x_t)$, the only unknown in this expression is $x_0$, so it is enough to have the neural network predict $x_0$. Denoting the network's prediction of $x_0$ by $f_\theta(x_t,t)$, Eq. (12) can be rewritten as follows:

$$
\begin{aligned}
\frac{1}{2\sigma^2_t}\left[||\mu_\phi-\mu_\theta(x_t,t)||^2\right]=&\frac{1}{2\sigma^2_t}\left[\left|\left|\left(\frac{\sqrt{\alpha_t}(1-\bar\alpha_{t-1})x_t}{1-\bar\alpha_t}+\frac{\sqrt{\bar\alpha_{t-1}}\beta_t x_0}{1-\bar\alpha_t}\right)-\left(\frac{\sqrt{\alpha_t}(1-\bar\alpha_{t-1})x_t}{1-\bar\alpha_t}+\frac{\sqrt{\bar\alpha_{t-1}}\beta_t f_\theta(x_t,t)}{1-\bar\alpha_t}\right)\right|\right|^2\right]\\
=&\frac{\bar\alpha_{t-1}\beta_t^2}{2\sigma^2_t(1-\bar\alpha_t)^2}\left[||x_0-f_\theta(x_t,t)||^2\right]
\end{aligned}
$$

Alternatively, the network can predict the noise instead.

From

$$
x_t=\sqrt{\bar\alpha_t}x_{0}+\sqrt{1-\bar\alpha_t}\epsilon_t\ \rightarrow\ x_0=\frac{x_t-\sqrt{1-\bar\alpha_t}\epsilon_t}{\sqrt{\bar\alpha_t}}\tag{14}
$$

substitute $x_0$ into Eq. (13):

$$
\begin{aligned}
\mu_\phi=&\frac{\sqrt{\alpha_t}(1-\bar\alpha_{t-1})x_t}{1-\bar\alpha_t}+\frac{\sqrt{\bar\alpha_{t-1}}\beta_t}{1-\bar\alpha_t}\frac{x_t-\sqrt{1-\bar\alpha_t}\epsilon_t}{\sqrt{\bar\alpha_t}}\\
=&\frac{\sqrt{\alpha_t}(1-\bar\alpha_{t-1})x_t}{1-\bar\alpha_t}+\frac{\sqrt{\bar\alpha_{t-1}}\beta_tx_t}{(1-\bar\alpha_t)\sqrt{\bar\alpha_t}}-\frac{\sqrt{\bar\alpha_{t-1}}\beta_t\sqrt{1-\bar\alpha_t}\epsilon_t}{(1-\bar\alpha_t)\sqrt{\bar\alpha_t}}\\
=&\frac{\sqrt{\alpha_t}(1-\bar\alpha_{t-1})x_t}{1-\bar\alpha_t}+\frac{\beta_tx_t}{(1-\bar\alpha_t)\sqrt{\alpha_t}}-\frac{\beta_t\sqrt{1-\bar\alpha_t}\epsilon_t}{(1-\bar\alpha_t)\sqrt{\alpha_t}}\\
=&\frac{1}{\sqrt{\alpha_t}}\left[\frac{\alpha_t(1-\bar\alpha_{t-1})x_t}{1-\bar\alpha_t}+\frac{\beta_tx_t}{1-\bar\alpha_t}-\frac{\beta_t\sqrt{1-\bar\alpha_t}\epsilon_t}{1-\bar\alpha_t}\right]\\
=&\frac{1}{\sqrt{\alpha_t}}\left[\left(\frac{\alpha_t(1-\bar\alpha_{t-1})+\beta_t}{1-\bar\alpha_t}\right)x_t-\frac{\beta_t\sqrt{1-\bar\alpha_t}\epsilon_t}{1-\bar\alpha_t}\right]\\
=&\frac{1}{\sqrt{\alpha_t}}\left[x_t-\frac{\beta_t}{\sqrt{1-\bar\alpha_t}}\epsilon_t\right]
\end{aligned}\tag{15}
$$
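As a quick numerical check of Eq. (15), the sketch below evaluates the posterior mean once from $x_0$ (Eq. (13)) and once from the noise $\epsilon_t$ (Eq. (15)) for a scalar sample. The function names, the seed, and the values of `alpha_t` and `abar_prev` are assumptions made for the example.

```python
import math
import random

def mu_from_x0(x_t, x_0, alpha_t, abar_prev):
    """Posterior mean written in terms of x_0, as in Eq. (13)."""
    beta_t = 1.0 - alpha_t
    abar_t = alpha_t * abar_prev
    return (math.sqrt(alpha_t) * (1.0 - abar_prev) * x_t
            + math.sqrt(abar_prev) * beta_t * x_0) / (1.0 - abar_t)

def mu_from_eps(x_t, eps, alpha_t, abar_prev):
    """Posterior mean written in terms of the noise, as in Eq. (15)."""
    beta_t = 1.0 - alpha_t
    abar_t = alpha_t * abar_prev
    return (x_t - beta_t / math.sqrt(1.0 - abar_t) * eps) / math.sqrt(alpha_t)

rng = random.Random(0)
alpha_t, abar_prev = 0.98, 0.5   # arbitrary example values
abar_t = alpha_t * abar_prev
x_0 = rng.gauss(0.0, 1.0)
eps = rng.gauss(0.0, 1.0)
# Forward process: x_t = sqrt(abar_t) * x_0 + sqrt(1 - abar_t) * eps
x_t = math.sqrt(abar_t) * x_0 + math.sqrt(1.0 - abar_t) * eps

mu_a = mu_from_x0(x_t, x_0, alpha_t, abar_prev)
mu_b = mu_from_eps(x_t, eps, alpha_t, abar_prev)
print(mu_a, mu_b)
```

The two values should coincide up to rounding, since $x_0$ and $\epsilon_t$ determine each other once $x_t$ is fixed.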

Now the only unknown left in $P(x_{t-1}|x_t)$ is $\epsilon_t$, so we let the neural network predict the noise:

$$
\begin{aligned}
\frac{1}{2\sigma^2_t}\left[||\mu_\phi-\mu_\theta(x_t,t)||^2\right]=&\frac{1}{2\sigma_t^2}\left|\left|\frac{1}{\sqrt{\alpha_t}}\left(x_t-\frac{\beta_t}{\sqrt{1-\bar\alpha_t}}\epsilon_t\right)-\frac{1}{\sqrt{\alpha_t}}\left(x_t-\frac{\beta_t}{\sqrt{1-\bar\alpha_t}}\epsilon_\theta(x_t,t)\right)\right|\right|^2\\
=&\frac{\beta_t^2}{2\sigma_t^2(1-\bar\alpha_t)\alpha_t}||\epsilon_t-\epsilon_\theta(x_t,t)||^2
\end{aligned}
$$
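The $x_0$-prediction loss and the noise-prediction loss are the same quantity written two ways. The sketch below checks this for a scalar sample, assuming the two predictions are tied by $f_\theta=\frac{x_t-\sqrt{1-\bar\alpha_t}\,\epsilon_\theta}{\sqrt{\bar\alpha_t}}$. The function names and all numeric values are made up for the illustration.

```python
import math

def loss_x0_form(x_0, x0_pred, alpha_t, abar_prev, sigma2):
    """Weighted squared error between x_0 and its prediction."""
    beta_t = 1.0 - alpha_t
    abar_t = alpha_t * abar_prev
    weight = abar_prev * beta_t ** 2 / (2.0 * sigma2 * (1.0 - abar_t) ** 2)
    return weight * (x_0 - x0_pred) ** 2

def loss_eps_form(eps, eps_pred, alpha_t, abar_prev, sigma2):
    """Weighted squared error between the true noise and the predicted noise."""
    beta_t = 1.0 - alpha_t
    abar_t = alpha_t * abar_prev
    weight = beta_t ** 2 / (2.0 * sigma2 * (1.0 - abar_t) * alpha_t)
    return weight * (eps - eps_pred) ** 2

# Arbitrary example values (assumptions for the check).
alpha_t, abar_prev = 0.97, 0.6
abar_t = alpha_t * abar_prev
sigma2 = 1.0 - alpha_t            # one of the two variance choices, sigma_t^2 = beta_t
x_0, eps, eps_pred = 0.8, -0.3, 0.1

x_t = math.sqrt(abar_t) * x_0 + math.sqrt(1.0 - abar_t) * eps
# x_0 prediction implied by the noise prediction
x0_pred = (x_t - math.sqrt(1.0 - abar_t) * eps_pred) / math.sqrt(abar_t)

l_x0 = loss_x0_form(x_0, x0_pred, alpha_t, abar_prev, sigma2)
l_eps = loss_eps_form(eps, eps_pred, alpha_t, abar_prev, sigma2)
print(l_x0, l_eps)
```

So choosing between the two parameterizations changes what the network outputs and how the error is weighted, not the underlying KL term.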

At this point we finally have the optimization objective for the KL divergence terms.

Next, let us look at the reconstruction loss.

$$
\begin{aligned}
\max \mathbb{E}_{q(x_1|x_0)}[\log P(x_0|x_1)]\approx&\max\frac{1}{n}\sum\limits_{i=1}^n\log P(x_0^i|x_1^i)\\
=&\max\frac{1}{n}\sum\limits_{i=1}^n\log \frac{1}{(2\pi)^{D/2}|\Sigma_\theta|^{1/2}}\exp\left\{-\frac{1}{2}(x_0^i-\mu_\theta(x_1^i,1))^T\Sigma_\theta^{-1}(x_0^i-\mu_\theta(x_1^i,1))\right\}\\
=&\max\frac{1}{n}\sum\limits_{i=1}^n\left[\log \frac{1}{(2\pi)^{D/2}|\Sigma_\theta|^{1/2}}-\frac{1}{2}(x_0^i-\mu_\theta(x_1^i,1))^T\Sigma_\theta^{-1}(x_0^i-\mu_\theta(x_1^i,1))\right]\\
\Leftrightarrow&\min \frac{1}{n}\sum\limits_{i=1}^n(x_0^i-\mu_\theta(x_1^i,1))^T(x_0^i-\mu_\theta(x_1^i,1))\\
=&\min \frac{1}{n}\sum\limits_{i=1}^n||x_0^i-\mu_\theta(x_1^i,1)||^2
\end{aligned}
$$

Substituting $x_0$ from Eq. (14) and the mean from Eq. (15):

$$
\begin{aligned}
||x_0^i-\mu_\theta(x_1^i,1)||^2=&\left|\left|\frac{x_1-\sqrt{1-\bar\alpha_1}\epsilon_1}{\sqrt{\bar\alpha_1}}-\frac{1}{\sqrt{\alpha_1}}\left[x_1-\frac{\beta_1}{\sqrt{1-\bar\alpha_1}}\epsilon_\theta(x_1,1)\right]\right|\right|^2\\
\propto&||\epsilon_\theta(x_1,1)-\epsilon_1||^2
\end{aligned}
$$
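The proportionality in the last step can be made explicit. At $t=1$ we have $\bar\alpha_1=\alpha_1$ and $\beta_1=1-\alpha_1=1-\bar\alpha_1$, so $\frac{\beta_1}{\sqrt{1-\bar\alpha_1}}=\sqrt{1-\alpha_1}$ and the $x_1$ terms cancel:

$$
\begin{aligned}
\frac{x_1-\sqrt{1-\alpha_1}\epsilon_1}{\sqrt{\alpha_1}}-\frac{x_1-\sqrt{1-\alpha_1}\epsilon_\theta(x_1,1)}{\sqrt{\alpha_1}}=&\frac{\sqrt{1-\alpha_1}}{\sqrt{\alpha_1}}\left(\epsilon_\theta(x_1,1)-\epsilon_1\right)\\
\Rightarrow\quad||x_0-\mu_\theta(x_1,1)||^2=&\frac{1-\alpha_1}{\alpha_1}||\epsilon_\theta(x_1,1)-\epsilon_1||^2
\end{aligned}
$$

The constant $\frac{1-\alpha_1}{\alpha_1}$ does not depend on $\theta$, so the reconstruction term has the same noise-matching form as the KL terms.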

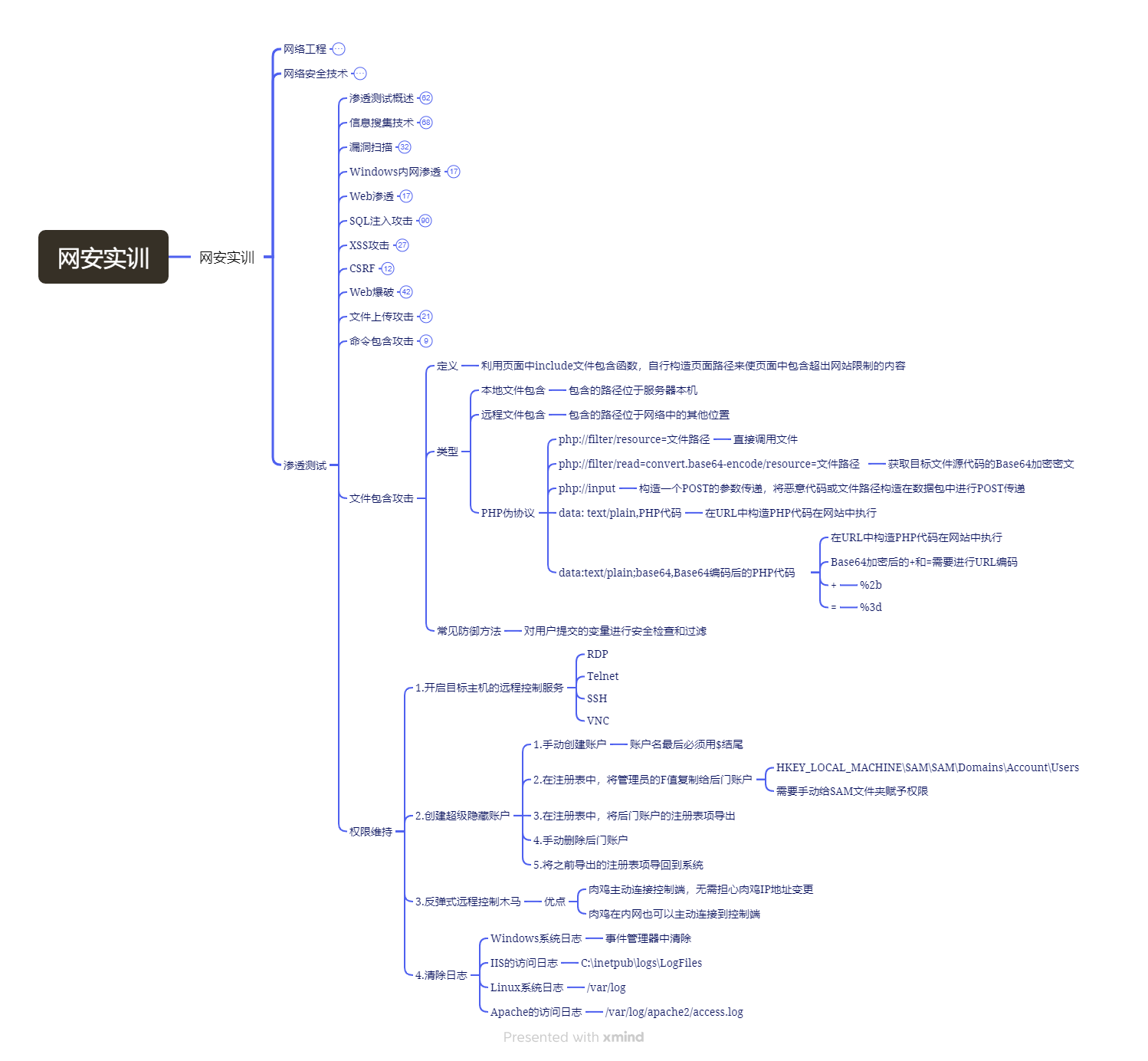

So the final procedure is:
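The procedure can be sketched in code, assuming it is the usual noise-prediction training step and ancestral sampling loop from the DDPM paper. This is a scalar toy version under stated assumptions: `eps_model` is a hypothetical placeholder for the trained network, the linear schedule and `T = 50` are arbitrary, the loss is the unweighted squared error (the per-step weight derived above is dropped), and $\sigma_t^2=\beta_t$ is used for sampling.

```python
import math
import random

T = 50
# Assumed linear beta schedule (illustrative only).
betas = [1e-4 + (0.02 - 1e-4) * i / (T - 1) for i in range(T)]
alphas = [1.0 - b for b in betas]
abars = []
prod = 1.0
for a in alphas:
    prod *= a
    abars.append(prod)  # abars[t - 1] holds abar_t

def eps_model(x_t, t):
    """Hypothetical stand-in for the network eps_theta(x_t, t); always predicts zero noise."""
    return 0.0

def training_loss(x_0, rng):
    """One training-step loss: pick t, add noise, compare true and predicted noise."""
    t = rng.randint(1, T)              # t uniform in {1, ..., T}
    eps = rng.gauss(0.0, 1.0)
    abar_t = abars[t - 1]
    x_t = math.sqrt(abar_t) * x_0 + math.sqrt(1.0 - abar_t) * eps
    return (eps - eps_model(x_t, t)) ** 2

def sample(rng):
    """Start from x_T ~ N(0, 1) and step backwards using the mean from Eq. (15)."""
    x = rng.gauss(0.0, 1.0)
    for t in range(T, 0, -1):
        beta_t, alpha_t, abar_t = betas[t - 1], alphas[t - 1], abars[t - 1]
        mean = (x - beta_t / math.sqrt(1.0 - abar_t) * eps_model(x, t)) / math.sqrt(alpha_t)
        z = rng.gauss(0.0, 1.0) if t > 1 else 0.0   # no noise added at the last step
        x = mean + math.sqrt(beta_t) * z            # sigma_t^2 = beta_t
    return x

rng = random.Random(0)
loss = training_loss(0.5, rng)
x_gen = sample(rng)
print(loss, x_gen)
```

In a real setup the loss would be averaged over a batch and minimized with gradient descent on the network parameters; that part is omitted here.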

4. Conclusion

That is all for this post. If you spot any problems, please point them out. Thank you!

5. References

①一文解释 Diffusion Model (一) DDPM 理论推导 - 知乎 (zhihu.com)

②Diffusion Model入门(8)——Denoising Diffusion Probabilistic Models(完结篇) - 知乎 (zhihu.com)

③两个多元正态分布的KL散度、巴氏距离和W距离 - 科学空间|Scientific Spaces (kexue.fm)

⑤diffusion model 最近在图像生成领域大红大紫,如何看待它的风头开始超过 GAN ? - 知乎 (zhihu.com)